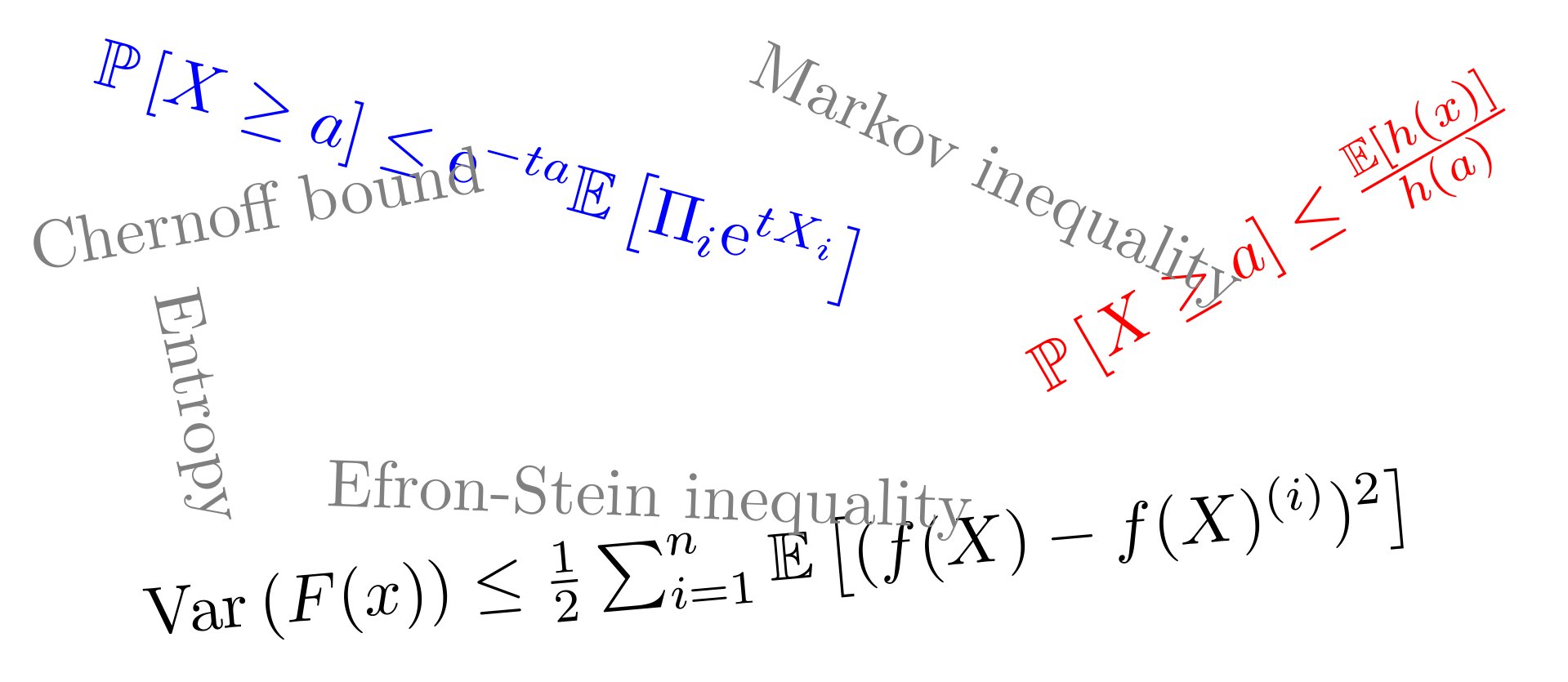

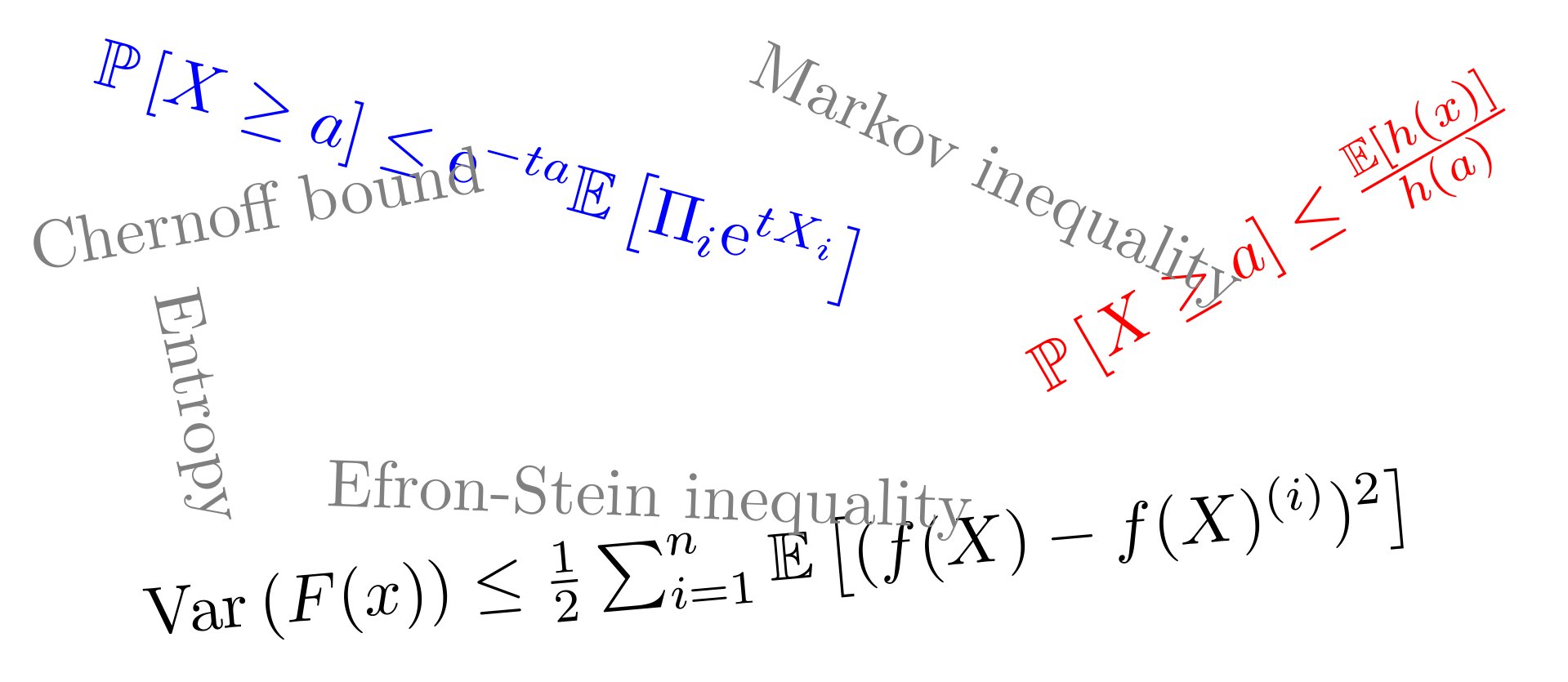

Concentration inequalities

Engineering Academy

Learn Without Limits: Free Engineering Courses

Pre-recorded video course. Watch anytime at your own pace.

FREE

Advanced course for professionals

Anytime Learning

Learn from Industry Expert

Career Option Guideline

Why enroll

People join this course to build a strong theoretical foundation in probability, which is essential for advanced study and research in data science, machine learning, artificial intelligence, and applied mathematics. It is particularly valuable for students preparing for research-oriented careers, higher education, or competitive exams, because concentration inequalities are frequently used to analyze algorithms and the behavior of large-scale data. Learners also benefit from understanding how uncertainty and randomness are controlled in real-world systems.

Course content

The course is readily available, allowing learners to start and complete it at their own pace.

Concentration Inequality

26 Lectures

1209 min

mod01lec01 Why study concentration inequalities?

53 min

mod01lec02 Chernoff bound

29 min

mod01lec03 Examples of Chernoff bound for common distributions

40 min

mod02lec04 Hoeffding and Bernstein inequalities

41 min

mod03lec06 Bounding variance using the Efron-Stein inequality

58 min

mod03lec07 The Gaussian-Poincaré inequality

33 min

mod03lec08 Tail bounds using the Efron-Stein inequality

47 min

mod04lec09 Herbst's argument and the entropy method

46 min

mod04lec10 Log-Sobolev inequalities

52 min

mod04lec11 Binary and Gaussian Log-Sobolev inequalities and concentration

52 min

mod05lec12 Variational formulae for Kullback-Leibler and Bregman Divergence

42 min

mod02lec05 Azuma and McDiarmid inequalities

52 min

mod05lec13 A modified log-Sobolev inequality and concentration

28 min

mod05lec14 Introduction to the transportation method for showing concentration bounds

65 min

mod05lec15 Transportation lemma and a proof of McDiarmid's inequality using the transportation method

42 min

mod06lec16 Concentration bounds for functions beyond bounded difference using transportation method

32 min

mod06lec17 Marton's conditional transportation cost inequality

44 min

mod06lec18 Isoperimetry and concentration of measure

35 min

mod06lec19 Isoperimetry and bounded difference

23 min

mod07lec20 Equivalence of Stam's inequality and log Sobolev inequality

48 min

mod07lec21 An information theoretic proof of log Sobolev inequality

40 min

mod07lec22 Hypercontractivity and strong data processing inequality for Rényi divergence

67 min

mod07lec23 An information theoretic characterization of hypercontractivity

47 min

mod07lec24 Equivalence of Gaussian hypercontractivity and Gaussian log Sobolev inequality

72 min

mod08lec25 Uniform deviation bounds for random walks and the law of the iterated logarithm

67 min

mod08lec26 Self normalized concentration inequalities and application to online regression

54 min

Course details

The NPTEL course on Concentration Inequalities introduces powerful mathematical tools used to analyze how random variables deviate from their expected values. The course focuses on probabilistic bounds that quantify the likelihood of large deviations in random processes. These inequalities form the backbone of modern probability theory and are widely used in statistics, machine learning, randomized algorithms, and data science to provide theoretical performance guarantees.
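
As a rough illustration of what such a bound looks like (this sketch is not taken from the lectures), Hoeffding's inequality states that the mean of n independent samples in [0, 1] deviates from its expectation by at least t with probability at most 2·exp(-2nt²). The function names, the fair-coin setup, and the parameter values below are assumptions chosen only for the example.

```python
import math
import random


def hoeffding_bound(n, t):
    """Two-sided Hoeffding bound on P(|sample mean - true mean| >= t)
    for n independent samples taking values in [0, 1]."""
    return min(1.0, 2.0 * math.exp(-2.0 * n * t * t))


def empirical_tail(n, t, trials=5000, seed=0):
    """Estimate the same probability by simulating fair coin flips
    (hypothetical setup: each sample is 0 or 1 with probability 1/2)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        mean = sum(rng.random() < 0.5 for _ in range(n)) / n
        if abs(mean - 0.5) >= t:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    n, t = 100, 0.1
    print("Hoeffding bound:", hoeffding_bound(n, t))
    print("Simulated tail :", empirical_tail(n, t))
```

The simulated frequency should come out below the bound. The gap between the two reflects that Hoeffding's inequality holds for every bounded distribution and is therefore not tight for any particular one.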

SOURCE - NPTEL [YOUTUBE]

Course suitable for

Telecommunication, Electronics & Telecommunication, Instrumentation

Key topics covered

Review of probability theory and random variables

Markov and Chebyshev inequalities

Hoeffding’s inequality

Chernoff and Bernstein bounds

Azuma–Hoeffding inequality and martingales

McDiarmid’s inequality

Sub-Gaussian and sub-exponential random variables

Applications in machine learning and randomized algorithms

High-dimensional probability concepts
